In s3.getObject, the first argument is a parameters object and the second argument is a callback function.
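A call with this (parameters, callback) shape can be wrapped in a promise and consumed with .then(). The sketch below is only an illustration: `fakeS3` is a hypothetical in-memory stand-in, not the AWS SDK, and the bucket and key names are made up. `getS3Objects` is written the way such a wrapper might look, not necessarily how the original function was implemented.

```typescript
// Hypothetical stand-ins for illustration; these are not the AWS SDK types.
type GetObjectParams = { Bucket: string; Key: string };
type GetObjectCallback = (err: Error | null, data?: { Body: string }) => void;

// Mock client with the same (params, callback) call shape as s3.getObject.
const fakeS3 = {
  getObject(params: GetObjectParams, callback: GetObjectCallback): void {
    if (params.Key === "") {
      callback(new Error("Key must not be empty"));
      return;
    }
    callback(null, { Body: `contents of ${params.Bucket}/${params.Key}` });
  },
};

// Wrap the callback-style call in a promise.
function getS3Objects(bucket: string, objectKey: string): Promise<string> {
  return new Promise<string>((resolve, reject) => {
    fakeS3.getObject({ Bucket: bucket, Key: objectKey }, (err, data) => {
      if (err || !data) {
        reject(err ?? new Error("empty response"));
        return;
      }
      resolve(data.Body);
    });
  });
}

// The call returns a promise, so the result is only available inside .then().
getS3Objects("my-bucket", "sample.json")
  .then((response) => console.log(response))
  .catch((err: Error) => console.error(err.message));
```

Assigning the return value directly, as in `const response = getS3Objects(...)`, would store the promise itself rather than the object body.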

I understand what you are trying to accomplish here, but that is not the right way to do it. Instead of const response = getS3Objects(bucket, objectKey), you want to do getS3Objects(bucket, objectKey).then(response => console.log(response)). The part above will still return a promise object, which means that you need to handle it accordingly. Furthermore, your usage of the s3.getObject function is incorrect.

The Amazon S3 API supports prefix matching, but not wildcard matching. All Amazon S3 files that match a prefix will be transferred into Google Cloud. However, only those that match the Amazon S3 URI in the transfer configuration will actually get loaded into BigQuery. This could result in excess Amazon S3 egress costs for files that are transferred but not loaded into BigQuery.

In this tutorial, you will learn how to partition JSON data batches in your S3 bucket, execute basic queries on loaded JSON data, and optionally flatten it.

These files are put there by AWS Kinesis Firehose, and a new one is written every 5 minutes. They all describe similar events and so are logically the same, and all are valid JSON, but they have different structures/hierarchies. Their format and line endings are also inconsistent: some objects are on a single line, some span many lines, and sometimes the end of one object is on the same line as the start of another object.
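Files in which JSON objects run together like this cannot be parsed line by line. One possible approach, sketched below, is to track brace depth while skipping over string contents, and to parse each top-level object as soon as it closes. The function name `splitConcatenatedJson` and the sample data are hypothetical, and the sketch assumes every top-level value is a JSON object.

```typescript
// Split a string of back-to-back JSON objects into parsed values.
// Assumes each top-level value is an object ({ ... }), as in the files described above.
function splitConcatenatedJson(text: string): unknown[] {
  const results: unknown[] = [];
  let depth = 0;        // current nesting level of braces
  let start = -1;       // index where the current top-level object began
  let inString = false; // true while inside a JSON string literal
  let escaped = false;  // true right after a backslash inside a string

  for (let i = 0; i < text.length; i++) {
    const ch = text[i];
    if (inString) {
      // Braces inside strings must not affect the depth counter.
      if (escaped) escaped = false;
      else if (ch === "\\") escaped = true;
      else if (ch === '"') inString = false;
      continue;
    }
    if (ch === '"') {
      inString = true;
    } else if (ch === "{") {
      if (depth === 0) start = i;
      depth++;
    } else if (ch === "}" && depth > 0) {
      depth--;
      if (depth === 0 && start !== -1) {
        results.push(JSON.parse(text.slice(start, i + 1)));
        start = -1;
      }
    }
  }
  return results;
}

// Made-up sample: objects share a line, span lines, and contain a brace in a string.
const sample =
  '{"event":"a","n":1}{"event":"b",\n "detail":{"msg":"brace } in string"}}\n{"event":"c"}';
const events = splitConcatenatedJson(sample);
console.log(events.length); // 3
```

For files too large to hold in memory, the same state machine could be fed chunk by chunk, keeping the unparsed tail between chunks.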

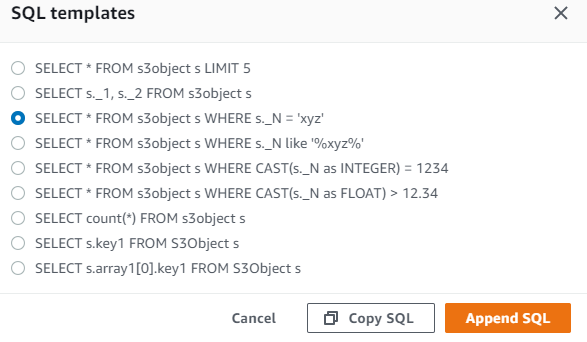

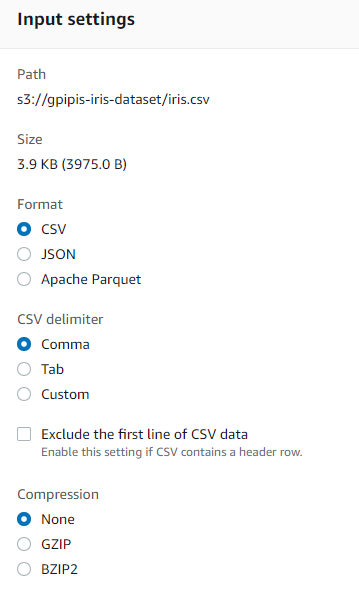

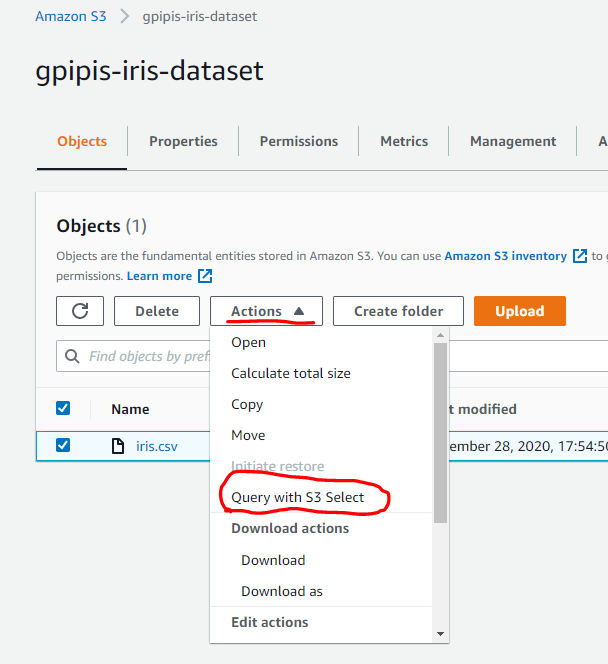

SQL and its supported aggregations can be used to analyze data in the S3 bucket. The /metrics/hardware path stores JSON files containing derived metrics. Other options include reading the data into memory using fastavro, pyarrow, or Python's JSON library.

They had multiple AWS accounts with multiple S3 buckets, and they wanted to query JSON files stored across them. They had two potential solutions. The first was to replicate all the data into a single bucket, effectively creating multiple copies; syncing would incur extra costs, and account and role management would be complex to maintain.

Athena is based on the Presto distributed SQL engine and can query data in many different formats, including JSON, CSV, and log files. Such engines can query data across data files directly in S3 (and HDFS for Presto). Your queries are expressed in standard ANSI SQL and can use JOINs, window functions, and other advanced features. Athena includes an interactive query editor to help get you going as quickly as possible. So I tried to create a table: CREATE EXTERNAL TABLE example ( key1 string, key2 string, key3 int ) ROW FORMAT serde '.data.JsonSerDe' LOCATION 's3://my-bucket/'.

Read JSON files from Amazon S3 buckets using the familiar SQL query language, and integrate with any ODBC-compliant reporting or ETL tool.

Query JSON and NDJSON files on Amazon S3. The selectObjectContent API makes it easy to query JSON and NDJSON data from S3. S3 Select supports CSV, JSON, and Parquet files, with GZIP and BZIP2 compression for CSV and JSON. Let's see how easily we can query an S3 object. Step 1: Go to your console and search for S3. Log into your AWS account via the Console, navigate to the S3 service, then inside a bucket of your choice (in our case query-data-s3-sql; remember that the name needs to be globally unique), upload the sample.json file. And now to the TypeScript function that can query that JSON array object.
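As a rough sketch of what an S3 Select request for JSON might look like, the helper below builds the parameter object that a selectObjectContent call expects. The interface is a simplified local description written for this example (the real SDK type has more fields), `buildSelectRequest` is a hypothetical helper name, and the SQL expression is only a placeholder. The bucket and file names are the ones mentioned above. No request is actually sent.

```typescript
// Simplified local description of a SelectObjectContent request for JSON input.
// Assumption: only the fields needed for JSON/NDJSON queries are modelled here.
interface SelectJsonRequest {
  Bucket: string;
  Key: string;
  ExpressionType: "SQL";
  Expression: string;
  InputSerialization: {
    JSON: { Type: "DOCUMENT" | "LINES" };
    CompressionType: "NONE" | "GZIP" | "BZIP2";
  };
  OutputSerialization: { JSON: { RecordDelimiter: string } };
}

// Hypothetical helper: builds the parameters but does not call AWS.
function buildSelectRequest(
  bucket: string,
  key: string,
  expression: string,
  ndjson: boolean = false,
  compression: "NONE" | "GZIP" | "BZIP2" = "NONE"
): SelectJsonRequest {
  return {
    Bucket: bucket,
    Key: key,
    ExpressionType: "SQL",
    Expression: expression,
    InputSerialization: {
      // "LINES" is for newline-delimited JSON, "DOCUMENT" for a single JSON document.
      JSON: { Type: ndjson ? "LINES" : "DOCUMENT" },
      CompressionType: compression,
    },
    // Emit one JSON record per line in the result stream.
    OutputSerialization: { JSON: { RecordDelimiter: "\n" } },
  };
}

const request = buildSelectRequest(
  "query-data-s3-sql",
  "sample.json",
  "SELECT * FROM S3Object s LIMIT 5" // placeholder expression
);
console.log(JSON.stringify(request, null, 2));
```

In a real program, an object like this would be passed to the SDK's selectObjectContent call, and the matching records would be read from the returned event stream.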

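We have an Amazon S3 bucket that contains around a million JSON files, each one around 500 KB compressed. With Amazon S3 Select, you can use simple structured query language (SQL) statements to filter the contents of an Amazon S3 object and retrieve only the subset of data that you need.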
